Building Repository-Scale AI Coding Agents: What the RepomindAI Project Teaches Us About the Future of Development

If you've been paying attention to recent AI developments in the developer tools space, you've probably noticed a frustrating limitation: most coding assistants work at the file or function level. They're helpful, sure, but they lack the complete picture of your entire codebase. That's where context comes in—and where RepomindAI takes an interesting approach.

The Context Problem in Modern Development

Here's a scenario you've probably experienced: you're working in a monorepo with hundreds of files, and you need an AI assistant to refactor a component. The assistant understands that file, but it doesn't understand how your module connects to seventeen other services, how your shared utilities work, or what architectural patterns define your project.

Language models have always had hard context limits. GPT-4 launched with 8K and 32K variants, and GPT-4 Turbo later raised the ceiling to 128K. But understanding a complex repository with multiple interconnected services often requires looking at test files, configuration files, documentation, dependency trees, and implementation details across dozens of files simultaneously.

Enter 256K Context Windows and AMD MI300X

The RepomindAI project runs on the AMD MI300X, a data-center GPU with 192 GB of HBM3 memory, and aims for what is sometimes called "repo-scale" understanding. With a 256K-token context window, you're not just looking at one file anymore. You can place entire subsystems in the model's context at once.

To put this in perspective: a 256K-token context window holds roughly 190,000 words of English prose, which is about two full-length novels. Source code tends to use more tokens per word than prose, so the practical figure for a repository is lower, but it still means you can load:

- Multiple interdependent modules

- Complete test suites

- Architecture documentation

- Configuration files across your entire project

- API definitions and contracts

- Shared utilities and libraries

All at the same time.
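To make the budget concrete, here is a minimal sketch of how files from the categories above might be packed into a 256K-token window. It is an illustration, not RepomindAI's actual method: the `SourceFile` type, the `priority` ranking, the `reserve` size, and the four-characters-per-token estimate are all assumptions, and a real system would count tokens with the model's own tokenizer.

```python
from dataclasses import dataclass

CONTEXT_BUDGET = 256_000  # tokens; assumes "256K" means 256,000 (it may mean 262,144)
CHARS_PER_TOKEN = 4       # rough heuristic, not a real tokenizer


@dataclass
class SourceFile:
    path: str
    text: str
    priority: int  # lower number = more important (hypothetical ranking)


def estimate_tokens(text: str) -> int:
    """Approximate a token count from character length, rounding up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def pack_context(files, budget=CONTEXT_BUDGET, reserve=16_000):
    """Greedily select files in priority order until the budget runs out.

    `reserve` holds back tokens for the prompt and the model's reply.
    Returns (chosen paths, skipped paths, tokens still unused).
    """
    remaining = budget - reserve
    chosen, skipped = [], []
    for f in sorted(files, key=lambda f: f.priority):
        cost = estimate_tokens(f.text)
        if cost <= remaining:
            chosen.append(f.path)
            remaining -= cost
        else:
            skipped.append(f.path)
    return chosen, skipped, remaining
```

A greedy pass like this is the simplest policy. It never splits a file, so one very large file can be skipped while smaller, lower-priority files still fit.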

The FP8 Efficiency Play

But there's another clever part of this equation: FP8 quantization. Large language models are typically stored and served in 16-bit floating-point formats (FP16 or BF16), with FP32 reserved for some accumulations. FP8 halves that to 8 bits per value. That sounds like it would destroy accuracy, but with careful scaling and calibration, many models lose little measurable quality at this reduced precision.

Why does this matter? Efficiency. FP8 means:

- Faster inference: 8-bit values need half the memory bandwidth of 16-bit values, and hardware with native FP8 support can process them at higher throughput

- Lower memory overhead: More room for longer context windows on the same hardware

- Reduced power consumption: Important when you're running GPU-intensive workloads

- Better economics: Cheaper to run at scale

This is particularly relevant for developers thinking about deploying AI agents in production environments. Every millisecond saved in inference time translates to better user experience. Every bit of memory saved means you can serve more concurrent users.
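The memory argument can be checked with back-of-envelope arithmetic. The sketch below compares 16-bit and 8-bit storage for a hypothetical 70-billion-parameter model with 80 layers, 8 key/value heads, and a head dimension of 128. Those shape numbers are illustrative assumptions, not RepomindAI's configuration, and the estimate ignores activations and runtime overhead. It also assumes the KV cache is stored at the same precision as the weights, which the project may or may not do.

```python
GB = 1e9  # decimal gigabytes


def weight_bytes(n_params, bytes_per_value):
    """Memory needed to hold the model weights."""
    return n_params * bytes_per_value


def kv_cache_bytes(n_layers, n_kv_heads, head_dim, seq_len, bytes_per_value):
    """Memory needed for the attention KV cache of a single sequence.

    The leading 2 accounts for storing both keys and values.
    """
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_value


def footprint_gb(bytes_per_value, n_params=70e9, n_layers=80,
                 n_kv_heads=8, head_dim=128, seq_len=256_000):
    """Weights plus KV cache, in GB, for the hypothetical model shape."""
    total = (weight_bytes(n_params, bytes_per_value)
             + kv_cache_bytes(n_layers, n_kv_heads, head_dim,
                              seq_len, bytes_per_value))
    return total / GB


if __name__ == "__main__":
    for label, width in (("16-bit", 2), ("FP8", 1)):
        print(f"{label}: {footprint_gb(width):.1f} GB")
```

Under these assumptions the 16-bit configuration needs about 224 GB for one full-length sequence, which exceeds the 192 GB on a single MI300X, while the FP8 configuration needs about 112 GB and fits with room to spare.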

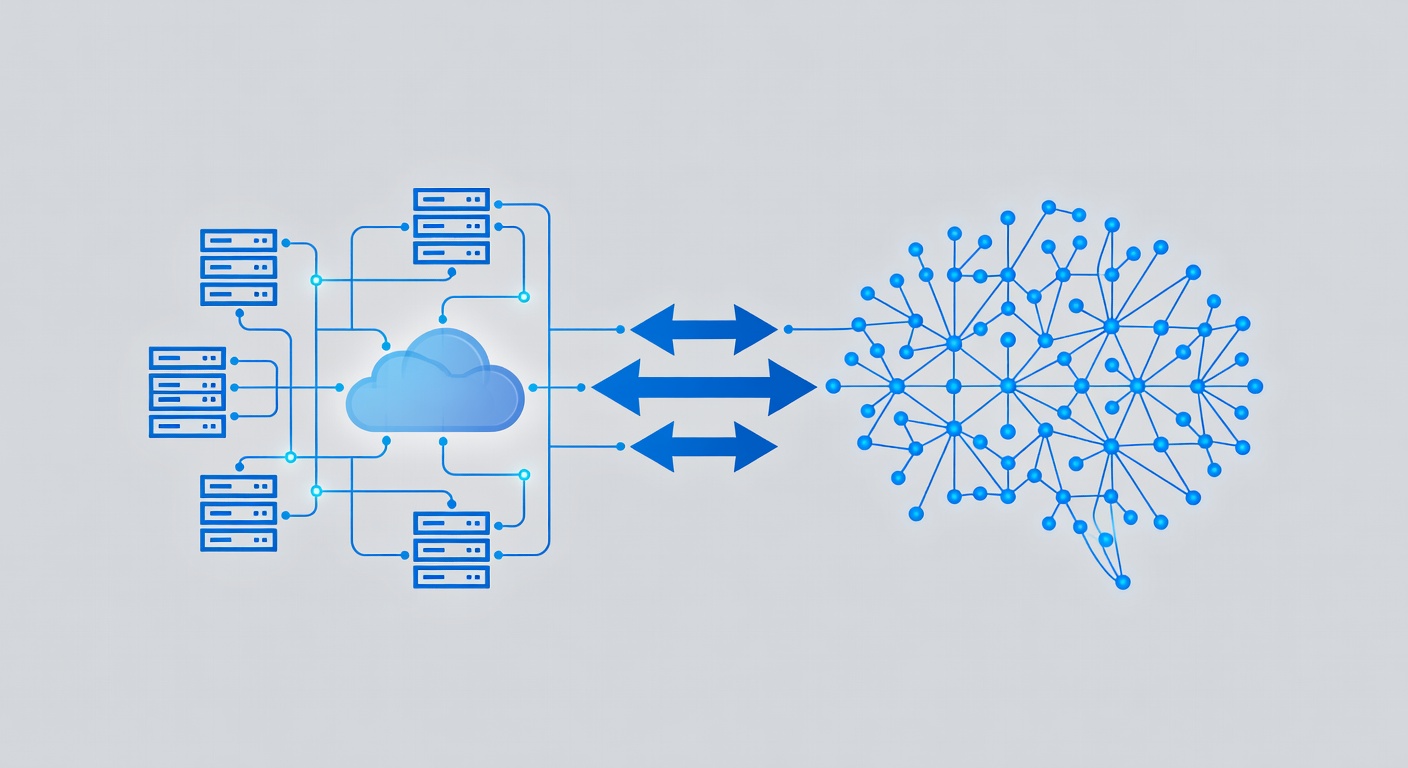

The Architecture of an Intelligent Coding Agent

What makes RepomindAI different from simply throwing a long context window at a language model? The project appears to focus on agentic behavior—essentially, building a system that doesn't just generate code, but actively reasons about your repository structure and problem-solving approach.

A true repo-scale coding agent should be able to:

- Map dependencies: Understand which files import from which, and why

- Identify patterns: Recognize architectural patterns and code styles specific to your project

- Reason about impact: Know that changing Module A might affect Services B, C, and D

- Generate contextual solutions: Not just fix syntax, but suggest changes that align with your codebase's philosophy

- Ask clarifying questions: Request more information when context is ambiguous

This is fundamentally different from code completion or simple refactoring suggestions.
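The first and third capabilities, mapping dependencies and reasoning about impact, can be sketched with nothing more than Python's standard `ast` module. This is a simplified illustration rather than how RepomindAI works: it handles only Python sources, looks only at top-level module names, ignores relative imports, and the function names are made up for the example.

```python
import ast
from collections import defaultdict, deque


def imports_of(source: str) -> set[str]:
    """Return the top-level module names imported by a piece of Python source."""
    found = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            found.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            found.add(node.module.split(".")[0])
    return found


def build_reverse_graph(modules: dict[str, str]) -> dict[str, set[str]]:
    """Map each in-repo module to the set of in-repo modules that import it.

    `modules` maps a module name to its source text.
    """
    dependents = defaultdict(set)
    for name, source in modules.items():
        for dep in imports_of(source):
            if dep in modules and dep != name:
                dependents[dep].add(name)
    return dependents


def impacted_by(changed: str, dependents: dict[str, set[str]]) -> set[str]:
    """Breadth-first walk over everything that imports `changed`, directly or not."""
    seen, queue = set(), deque([changed])
    while queue:
        current = queue.popleft()
        for parent in dependents.get(current, ()):
            if parent not in seen:
                seen.add(parent)
                queue.append(parent)
    seen.discard(changed)  # a module in an import cycle is not its own dependent
    return seen
```

For example, if `billing` imports `utils`, `api` imports `billing`, and `cli` imports `api`, then `impacted_by("utils", graph)` reports all three. A static graph like this gives an agent a concrete list of files to pull into context before it proposes a change.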

Why This Matters for Your Infrastructure

If you're running a startup or managing a development team, consider what a truly intelligent repository-scale coding agent could do:

Onboarding acceleration: New developers could point the agent at the team's codebase and get instant architectural walkthroughs, documentation generation, and guided introductions to critical systems.

Refactoring confidence: Large-scale refactoring projects become less risky when the AI understands the entire dependency graph and can identify all potential breaking changes.

Documentation generation: Automated, accurate documentation that reflects the actual state of your code (not outdated README files).

Architectural review: AI analysis of your repository structure to suggest improvements, identify technical debt, and flag potential issues.

Cross-service debugging: When problems span multiple services, an AI with full repository context can trace issues more effectively than a developer hunting through logs.

The Open Source Opportunity

What's particularly interesting about RepomindAI is its open-source nature. This isn't a closed proprietary system from a major cloud provider—it's community-driven research demonstrating what's possible when you combine:

- Accessible open-source AI models

- Data-center GPUs that are becoming easier to rent or buy (AMD's MI300X is aiming for broader availability)

- Thoughtful engineering around context management and quantization

- Community contributions and improvements

This democratization matters. It means startups and independent developers can experiment with these concepts without paying cloud giants for every token processed. It means you could potentially run these systems on your own infrastructure, maintaining full control over sensitive codebases.

Looking Ahead: The AMD Developer Hackathon 2026

The project was built for the AMD Developer Hackathon, which signals an important trend: hardware manufacturers are investing in developer tools and AI infrastructure. This isn't just about raw GPU power anymore—it's about building ecosystems where developers can innovate with cutting-edge AI capabilities.

For anyone interested in AI-assisted development, domain management, or building on-premises AI infrastructure, watching projects like RepomindAI is essential. They show us the practical state of what's possible right now, not ten years from now.

What This Means for Your Tech Stack

Whether you're evaluating hosting providers, considering AI integration into your development workflow, or simply staying informed about where the industry is heading, projects like RepomindAI offer valuable insights:

- Context is king: Longer context windows fundamentally change what's possible in AI-assisted development

- Efficiency matters: FP8 quantization and optimization techniques make advanced AI practical at smaller scales

- Open source drives innovation: Community-built tools often move faster than corporate initiatives

- GPU architecture matters: AMD's approach to making powerful hardware accessible could reshape the AI development landscape

The future of coding assistance isn't about having a smart auto-complete. It's about having a collaborative system that understands your entire project, your architectural choices, your patterns, and your constraints.

RepomindAI is just the beginning.